This page describes the architecture of the node-manager module for CloudEphemeral nodes.

Module architecture

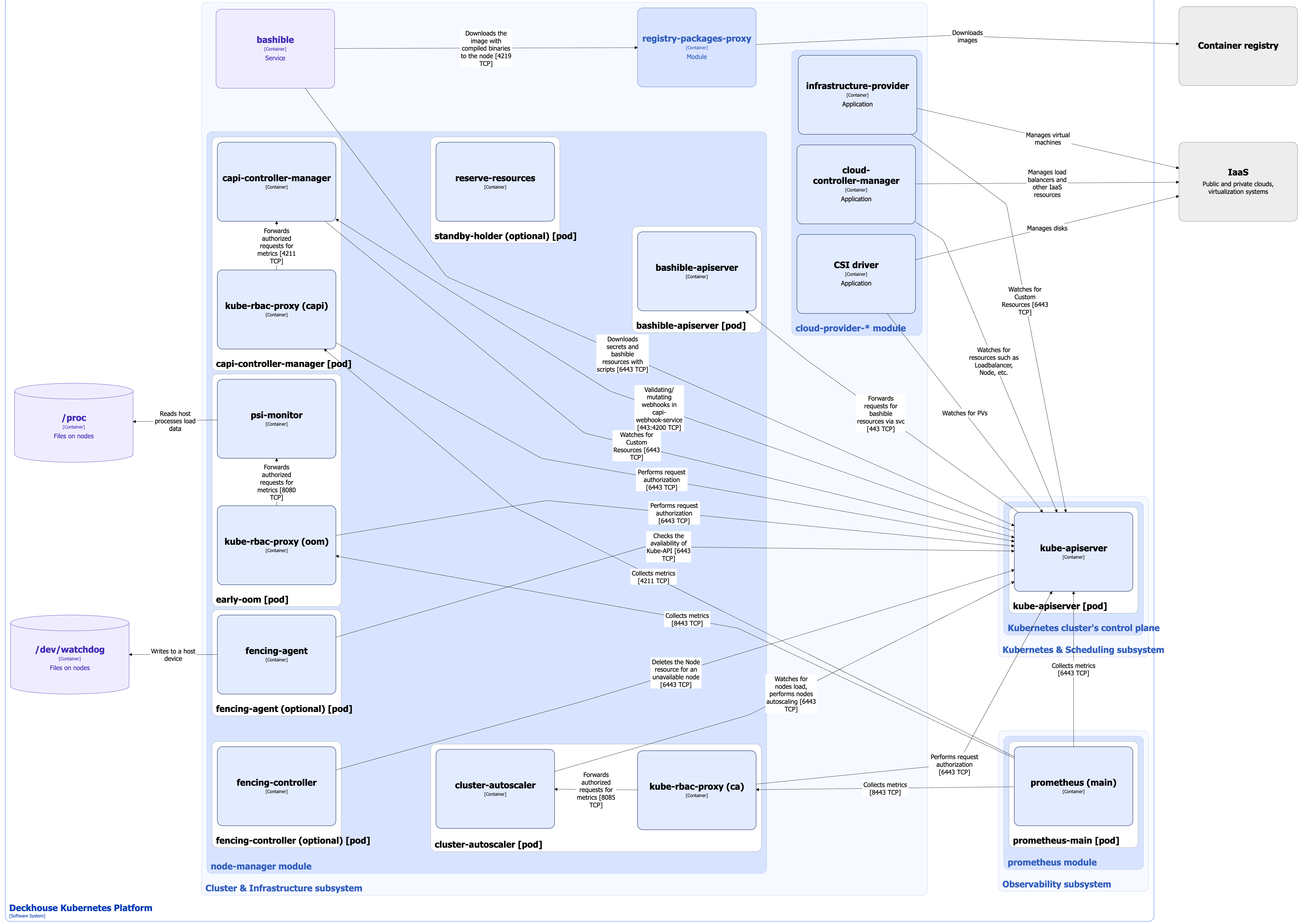

The following simplifications are made in the diagram:

- The diagram shows containers in different pods interacting directly with each other. In reality, they communicate via the corresponding Kubernetes Services (internal load balancers). Service names are omitted if they are obvious from the diagram context. Otherwise, the Service name is shown above the arrow.

- Pods may run multiple replicas. However, each pod is shown as a single replica in the diagram.

The Level 2 C4 architecture of the node-manager module and its interactions with other Deckhouse Kubernetes Platform (DKP) components are shown in the diagram above.

Module components

Bashible is a key component of the Cluster & Infrastructure subsystem that enables the operation of the node-manager module. However, it is not part of the module itself, as it runs at the OS level as a system service. For Bashible details, refer to the corresponding documentation section.

The module managing CloudEphemeral nodes consists of the following components:

- Bashible-api-server: A Kubernetes Extension API Server deployed on master nodes. It generates bashible scripts from templates stored in custom resources. When kube-apiserver receives a request for resources containing bashible bundles, it forwards the request to bashible-api-server and returns the generated result. For more details about bashible and bashible-api-server, refer to the corresponding documentation section.

- Capi-controller-manager (Deployment): Core controllers from the Kubernetes Cluster API project. Cluster API extends Kubernetes to manage clusters as custom resources within another Kubernetes cluster. The capi-controller-manager pod consists of the following containers:

  - control-plane-manager: Main container.

  - kube-rbac-proxy: Sidecar container providing an RBAC-based authorization proxy for secure access to controller metrics.

- Cluster-autoscaler (Deployment): An additional Kubernetes component that automatically adjusts the number of nodes in the cluster based on workload. For more details, refer to the node management documentation section.

  The component includes:

  - cluster-autoscaler: Main container.

  - kube-rbac-proxy: Sidecar container providing an RBAC-based authorization proxy for secure access to the cluster-autoscaler metrics.

- Early-oom (DaemonSet): A pod deployed on every node. It reads resource load metrics from `/proc` and terminates pods under high load before kubelet does. Enabled by default, but can be disabled in the module configuration if it causes issues for normal node operation.

  Includes the following containers:

  - psi-monitor: Monitors the PSI (Pressure Stall Information) metric, which reflects how long processes wait for resources such as CPU, memory, or I/O.

  - kube-rbac-proxy: Sidecar container providing an RBAC-based authorization proxy for secure access to the early-oom metrics.

- Fencing-agent (DaemonSet): Deployed to a specific node group when the `spec.fencing` parameter of the NodeGroup custom resource is enabled.

  After startup, the agent activates the Watchdog timer and sets the label `node-manager.deckhouse.io/fencing-enabled` on the node. The agent periodically checks Kubernetes API availability. If the API is reachable, it sends a signal to the Watchdog, resetting the timer. It also monitors maintenance labels on the node and enables or disables Watchdog accordingly.

  The Linux kernel softdog module is used as the Watchdog with parameters `soft_margin=60` and `soft_panic=1`. This means the timeout is 60 seconds. If the timeout expires, a kernel panic occurs, and the node remains in that state until manually rebooted.

  Consists of a single container:

  - fencing-agent: Performs the checks described above and writes to `/dev/watchdog` to signal the Watchdog.

- Fencing-controller: A controller that watches all nodes labeled with `node-manager.deckhouse.io/fencing-enabled`. If a node is unavailable for more than 60 seconds, the controller deletes all pods from that node and then removes the node itself.

- Standby-holder (Deployment): A pod used to reserve nodes. When the `spec.cloudinstances.standby` parameter is enabled in the NodeGroup custom resource, standby nodes are created in all configured zones.

  A standby node is a cluster node with pre-reserved resources available for immediate scaling. This allows cluster-autoscaler to schedule workloads without waiting for node initialization, which may take several minutes.

  The standby-holder pod does not perform useful work. It simply reserves resources to prevent cluster-autoscaler from deleting temporarily unused nodes.

  The pod has the lowest PriorityClass and is evicted when real workloads are scheduled. For details on pod priority and preemption, refer to the Kubernetes documentation.

  The pod includes a single container reserve-resources.
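The fencing behavior described in the component list above can be illustrated with a minimal Python sketch. It is not the fencing-agent or fencing-controller source code: the function names, the returned status strings, and the injected callables are hypothetical, and the watchdog device is replaced by any writable binary stream so that the logic can be run without `/dev/watchdog`.

```python
import io
from typing import BinaryIO, Callable

# Timeout taken from the text: softdog soft_margin=60 and the
# controller's 60-second unavailability threshold.
FENCING_TIMEOUT_SECONDS = 60.0


def fencing_agent_step(
    api_reachable: Callable[[], bool],
    in_maintenance: Callable[[], bool],
    watchdog: BinaryIO,
) -> str:
    """One iteration of a hypothetical agent loop.

    The status strings are illustrative only; the real agent's
    internal states are not described in this document.
    """
    if in_maintenance():
        # Assumption: during maintenance the agent leaves the timer alone.
        return "watchdog-disabled"
    if api_reachable():
        # Any write to the watchdog device resets the kernel timer.
        watchdog.write(b"1")
        watchdog.flush()
        return "timer-reset"
    # No write: the timer keeps counting toward a kernel panic.
    return "timer-running"


def node_should_be_fenced(last_seen: float, now: float) -> bool:
    """Controller-side check: unavailable for more than 60 seconds."""
    return (now - last_seen) > FENCING_TIMEOUT_SECONDS


if __name__ == "__main__":
    device = io.BytesIO()  # stand-in for /dev/watchdog
    print(fencing_agent_step(lambda: True, lambda: False, device))
    print(node_should_be_fenced(last_seen=0.0, now=75.0))
```

In this sketch the agent and the controller share the same 60-second budget: the agent stops resetting the timer when the API is unreachable, and the controller treats a node as lost once it has been unavailable for longer than that interval.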

Module interactions

The module interacts with the following components:

- Kube-apiserver:

  - Retrieves the `kube-system/d8-node-manager-cloud-provider` Secret for cloud connectivity.

  - Works with Cluster API custom resources.

  - Manages Node resources.

  - Monitors node load.

  - Performs node autoscaling.

  - Authorizes metric requests.

- Node filesystem:

  - `/proc`: Reads PSI metrics for OOM handling.

  - `/dev/watchdog`: Sends signals to reset the Watchdog timer.
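As an illustration of the PSI data read from `/proc`, the following Python sketch parses the text format that the Linux kernel exposes in files such as `/proc/pressure/memory`. The function names and the threshold value are hypothetical and are not taken from the psi-monitor container. The sample string only mimics the kernel format so that the example runs on any machine.

```python
from typing import Dict

# Sample in the kernel PSI format; the numbers are made up for illustration.
SAMPLE_PSI = (
    "some avg10=1.50 avg60=0.75 avg300=0.10 total=123456\n"
    "full avg10=0.50 avg60=0.25 avg300=0.05 total=65432\n"
)


def parse_psi(text: str) -> Dict[str, Dict[str, float]]:
    """Parse PSI output into {"some": {...}, "full": {...}}."""
    result: Dict[str, Dict[str, float]] = {}
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue  # skip blank lines
        kind, fields = parts[0], parts[1:]
        result[kind] = {
            key: float(value)
            for key, value in (field.split("=", 1) for field in fields)
        }
    return result


def pressure_exceeded(text: str, full_avg10_threshold: float) -> bool:
    """Hypothetical check: is the 10-second 'full' stall share too high?"""
    psi = parse_psi(text)
    return psi.get("full", {}).get("avg10", 0.0) > full_avg10_threshold


if __name__ == "__main__":
    print(parse_psi(SAMPLE_PSI)["some"])
    print(pressure_exceeded(SAMPLE_PSI, full_avg10_threshold=0.4))
```

On a node with PSI enabled, the same parser could be fed the contents of `/proc/pressure/memory` instead of the sample string. Which PSI file and threshold the module actually uses is not stated in this document.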

The module interacts with the cloud-provider module via kube-apiserver using the kube-system/d8-node-manager-cloud-provider Secret to obtain cloud connection settings and create CloudEphemeral nodes. The cloud-provider module also provides provider-specific Cluster API custom resource templates to node-manager.

The following external components interact with the module:

- Kube-apiserver:

  - Executes mutating and validating webhooks of capi-controller-manager.

  - Forwards requests for bashible resources to bashible-api-server.

- Prometheus-main:

  - Collects metrics from `node-manager` module components.

Architecture features specific to CloudEphemeral nodes

- Nodes are ephemeral and automatically created and deleted by the module.

- A configured cloud provider module (`cloud-provider-*`) is required for interaction with cloud infrastructure. It also includes csi-driver and cloud-controller-manager.

- Capi-controller-manager manages the lifecycle of the cluster and its nodes through higher-level custom resources, without directly provisioning infrastructure. It generates infrastructure-specific custom resources, leaving provisioning to the `cloud-provider` module.

- Cluster-autoscaler enables node autoscaling.

- Node reservation is supported.
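Node reservation relies on a lowest-priority placeholder pod that gives way to real workloads. The toy Python model below illustrates that idea only. It is not the Kubernetes scheduler or the standby-holder implementation, it considers a single node and CPU only, and the `Pod` type, the `place` function, and the priority values are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Pod:
    name: str
    cpu_millis: int
    priority: int


def place(node_cpu_millis: int, running: List[Pod], incoming: Pod) -> List[Pod]:
    """Try to place `incoming`, evicting lower-priority pods if needed.

    Returns the pods on the node afterwards. If the pod cannot fit even
    after evicting every lower-priority pod, the node is left unchanged.
    """
    free = node_cpu_millis - sum(p.cpu_millis for p in running)
    # Consider the lowest-priority pods first as eviction candidates.
    remaining = sorted(running, key=lambda p: p.priority)
    while (
        free < incoming.cpu_millis
        and remaining
        and remaining[0].priority < incoming.priority
    ):
        victim = remaining.pop(0)
        free += victim.cpu_millis
    if free >= incoming.cpu_millis:
        return remaining + [incoming]
    return list(running)


if __name__ == "__main__":
    # The placeholder holds most of the node with a very low priority.
    holder = Pod("standby-holder", cpu_millis=3500, priority=-1000)
    app = Pod("app", cpu_millis=2000, priority=0)
    print([p.name for p in place(4000, [holder], app)])
```

While the placeholder is running, the node looks occupied, which is what keeps cluster-autoscaler from removing it. Once a real workload needs the capacity, the placeholder is the first pod to be evicted.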