The cloud-provider-vcd module is responsible for interacting with the VMware Cloud Director cloud resources. It allows the node-manager module to use VMware Cloud Director resources for provisioning nodes for the specified node group.

For more details about the module configuration, refer to the corresponding documentation section.

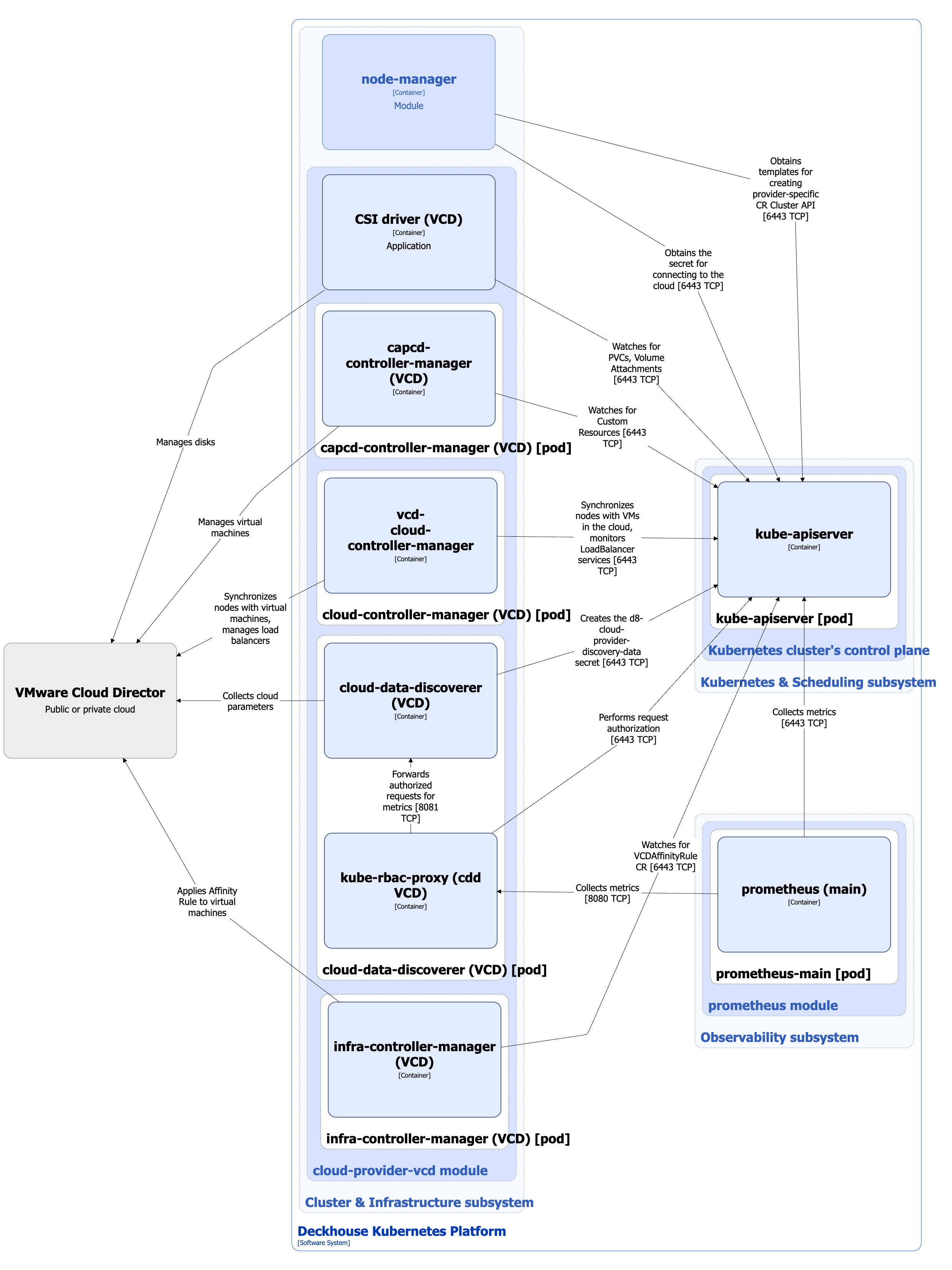

Module architecture

The following simplifications are made in the diagram:

- The diagram shows containers in different pods interacting directly with each other. In reality, they communicate via the corresponding Kubernetes Services (internal load balancers). Service names are omitted if they are obvious from the diagram context. Otherwise, the Service name is shown above the arrow.

- Pods may run multiple replicas. However, each pod is shown as a single replica in the diagram.

The Level 2 C4 architecture of the cloud-provider-vcd module and its interactions with other components of Deckhouse Kubernetes Platform (DKP) are shown in the diagram above.

Module components

The module consists of the following components:

- Capcd-controller-manager: Kubernetes Cluster API Provider Cloud Director. Cluster API is an extension for Kubernetes that allows you to manage Kubernetes clusters as custom resources inside another Kubernetes cluster. Cluster API Provider allows clusters running the Cluster API to order virtual machines in the cloud provider’s infrastructure, VMware Cloud Director in this case. Capcd-controller-manager works with the following custom resources:

  - VCDClusterTemplate: Template describing the characteristics of the cluster created in the cloud.

  - VCDCluster: Description of the characteristics of a cluster created based on VCDClusterTemplate.

  - VCDMachineTemplate: Template describing the characteristics of the machines created in the cloud.

  - VCDMachine: Description of the characteristics of a machine created based on VCDMachineTemplate.

  It consists of a single container:

  - capcd-controller-manager.

- Cloud-controller-manager: Kubernetes External Cloud Provider for VMware Cloud Director. It is an implementation of the cloud controller manager for VMware Cloud Director. It provides interaction with the VMware Cloud Director cloud and performs the following functions:

  - Implements a 1:1 relationship between a Node resource in Kubernetes and a VM in the cloud provider. To do this:

    - It fills the `spec.providerID` and `NodeInfo` fields of the Node resource.

    - It checks for a VM in the cloud and deletes the Node resource in the cluster if the VM is missing.

  - When a LoadBalancer Service resource is created in Kubernetes, it creates a load balancer in the cloud that routes external traffic to the cluster nodes.

  For more details about cloud-controller-manager, refer to the Kubernetes documentation.

  It consists of a single container:

  - vcd-cloud-controller-manager.

- Cloud-data-discoverer: It is responsible for collecting data from the cloud provider’s API and providing it as the `kube-system/d8-cloud-provider-discovery-data` Secret. This Secret contains the parameters of a specific cloud used by other components of the `cloud-provider-vcd` module.

  It consists of the following containers:

  - cloud-data-discoverer: Main container.

  - kube-rbac-proxy: Sidecar container providing an RBAC-based authorization proxy for secure access to the cloud-data-discoverer metrics.

- Infra-controller-manager: It is responsible for managing how nodes are placed relative to each other at the hypervisor level, which can help improve fault tolerance and control the distribution of workloads. Infra-controller-manager works with the VCDAffinityRule custom resource, which specifies an affinity rule applied only to virtual machines of node groups referencing this instance class within the cluster.

  It consists of a single container:

  - infra-controller-manager.

- CSI driver (VCD): It is an implementation of the CSI driver for VMware Cloud Director. To study the typical architecture of the `cloud-provider-*` CSI driver, refer to the corresponding documentation section. The `cloud-provider-vcd` module uses the CSI driver for VMware Cloud Director Named Independent Disks.

  CSI driver (VCD) does not support snapshots. For this reason, the `csi-controller` Pod does not include the snapshotter (external-snapshotter) sidecar container.
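The 1:1 relationship between Node resources and cloud VMs that cloud-controller-manager maintains can be illustrated with a minimal sketch. This is not the actual controller code: the `Node` class, the `sync_nodes` function, matching VMs by node name, and the `vmware-cloud-director://` identifier prefix are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Node:
    """Simplified, hypothetical stand-in for a Kubernetes Node resource."""
    name: str
    provider_id: Optional[str] = None  # plays the role of spec.providerID


def sync_nodes(nodes: List[Node], cloud_vms: Dict[str, str]) -> List[Node]:
    """Run one sync pass and return the nodes that remain in the cluster.

    cloud_vms maps a VM name to its cloud identifier (hypothetical format).
    Matching nodes to VMs by name is an assumption of this sketch.
    """
    kept = []
    for node in nodes:
        vm_id = cloud_vms.get(node.name)
        if vm_id is None:
            # The VM is missing in the cloud, so the Node resource is dropped.
            continue
        if node.provider_id is None:
            # Record the link between the Node and its VM.
            node.provider_id = f"vmware-cloud-director://{vm_id}"
        kept.append(node)
    return kept
```

For example, calling `sync_nodes([Node("worker-0"), Node("worker-1")], {"worker-0": "vm-123"})` keeps only `worker-0` and fills its `provider_id`, because no VM backs `worker-1`.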

Module interactions

The module interacts with the following components:

- Kube-apiserver:

  - Watches for PersistentVolumeClaim and VolumeAttachment resources.

  - Reconciles VCDClusterTemplate, VCDCluster, VCDMachineTemplate, VCDMachine, and VCDAffinityRule custom resources.

  - Creates the `kube-system/d8-cloud-provider-discovery-data` Secret.

  - Synchronizes Kubernetes nodes with cloud VMs.

  - Watches for LoadBalancer Services.

  - Authorizes the requests for metrics.

- VMware Cloud Director:

  - Collects cloud parameters.

  - Manages virtual machines.

  - Applies Affinity Rules to virtual machines.

  - Gets `ProviderId` and other information about the VMs that are cluster nodes.

  - Manages load balancers.

  - Manages disks.
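Components that consume the `kube-system/d8-cloud-provider-discovery-data` Secret receive its contents through kube-apiserver, where the values of a Secret's `data` field are base64-encoded. The following minimal sketch shows how such a value could be decoded. The key name `discovery-data.json`, the JSON payload format, and the `decode_discovery_data` helper are assumptions for illustration and are not taken from the module.

```python
import base64
import json


def decode_discovery_data(secret: dict, key: str = "discovery-data.json") -> dict:
    """Decode one JSON value from a Secret manifest given as a dict.

    The default key name is hypothetical. Values under `data` are
    base64-encoded, as in any Kubernetes Secret.
    """
    encoded = secret["data"][key]
    return json.loads(base64.b64decode(encoded))


def make_secret(payload: dict, key: str = "discovery-data.json") -> dict:
    """Build a Secret-like dict for local experiments (not a real API call)."""
    encoded = base64.b64encode(json.dumps(payload).encode()).decode()
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {
            "namespace": "kube-system",
            "name": "d8-cloud-provider-discovery-data",
        },
        "data": {key: encoded},
    }
```

A round trip such as `decode_discovery_data(make_secret({"zones": ["default"]}))` returns the original dictionary. The `zones` field here is made up for the example.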

The following external components interact with the module:

- Prometheus-main: Collects cloud-data-discoverer metrics.

Indirect interactions:

- The `cloud-provider-vcd` module provides `node-manager` with the following artifacts:

  - Provider-specific Cluster API custom resource templates to be used by `cloud-provider-vcd` to create VMs in the cloud.

  - The `kube-system/d8-node-manager-cloud-provider` Secret, which contains all the necessary settings to connect to the cloud and to create CloudEphemeral nodes. These settings are registered in the provider-specific Cluster API custom resources created based on the templates mentioned above.

- The `cloud-provider-vcd` module provides Terraform/OpenTofu components for the VMware Cloud Director cloud, which are used when building the `dhctl` executable file for the `terraform-manager` module, such as:

  - Terraform/OpenTofu provider.

  - Terraform modules.

  - Layouts: A set of cloud placement schemes that define how the basic infrastructure is created and how, and with which additional characteristics, nodes are created for this placement. For example, in one scheme nodes may have public IP addresses, while in another they do not. Each layout should have three modules:

    - `base-infrastructure`: Basic infrastructure (for example, creation of networks). It can also be empty.

    - `master-node`.

    - `static-node`.
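The requirement that each layout has three modules can be expressed as a simple check. The sketch below is illustrative only: the `missing_layout_modules` helper is hypothetical and not part of `dhctl` or the module, and it assumes the module names of a layout are already available as a list of strings.

```python
from typing import Iterable, List

# Module names that each layout is expected to provide, per the text above.
REQUIRED_MODULES = ("base-infrastructure", "master-node", "static-node")


def missing_layout_modules(present: Iterable[str]) -> List[str]:
    """Return the required module names that are absent from a layout."""
    present_set = set(present)
    return [name for name in REQUIRED_MODULES if name not in present_set]
```

An empty result means the layout is complete. For a layout that only contains `base-infrastructure` and `master-node`, the function reports `static-node` as missing.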